The Problem

- Standard infant vision exams (such as observing whether a baby follows objects, or showing cards) are slow, subjective, and often imprecise.

- Subtle vision problems (e.g., mild lazy eye or focus issues) can go undetected until a child is older and able to cooperate with traditional tests, missing a crucial early treatment window.

- Exams for toddlers can be stressful and inefficient – keeping a young child’s attention is hard, and lengthy appointments often yield incomplete or unreliable results due to fatigue or fussiness.

The Solution

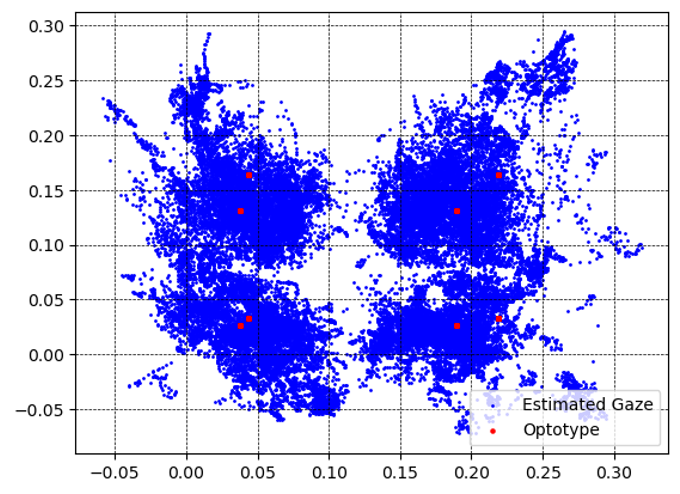

- Developed a tablet “game” that displays high-contrast shapes (optotypes) and uses the device’s front camera with OpenCV to track the infant’s eye movements in real time.

- The app employs an adaptive algorithm: it shows a shape and detects if the baby’s gaze fixes on it. If the child looks, the shape gets smaller next round; if not, it gets bigger – homing in on the smallest visible size (like a digital eye chart).

- The testing process is mostly automated – the examiner just starts the test and the system adjusts stimulus size, logs each response, and determines the infant’s visual acuity threshold without requiring verbal input from the child.
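The shrink-on-look, grow-on-miss rule described above can be sketched as a simple one-up/one-down staircase. This is an illustrative sketch only, not the app's actual code: the class name `Staircase`, the starting size, the step factor, the stop rule (a fixed number of reversals), and the threshold estimate (mean size at the reversals) are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Staircase:
    """Hypothetical one-up/one-down staircase over optotype size."""
    size: float = 40.0          # current stimulus size (arbitrary units, assumed)
    step: float = 0.5           # multiply by this after a look, divide after a miss
    min_size: float = 1.0       # clamp so the stimulus stays displayable
    max_size: float = 200.0
    max_reversals: int = 6      # assumed stop rule
    reversals: List[float] = field(default_factory=list)
    _last_looked: Optional[bool] = None

    def update(self, looked: bool) -> None:
        # A reversal is a trial whose response differs from the previous one.
        if self._last_looked is not None and looked != self._last_looked:
            self.reversals.append(self.size)
        self._last_looked = looked
        factor = self.step if looked else 1.0 / self.step
        self.size = min(max(self.size * factor, self.min_size), self.max_size)

    @property
    def done(self) -> bool:
        return len(self.reversals) >= self.max_reversals

    def threshold(self) -> float:
        # Assumed estimator: mean stimulus size at the recorded reversals.
        return sum(self.reversals) / len(self.reversals)


def run_test(looks_at: Callable[[float], bool], max_trials: int = 50) -> Staircase:
    """Run trials until enough reversals occur or the trial cap is reached."""
    stair = Staircase()
    for _ in range(max_trials):
        if stair.done:
            break
        stair.update(looks_at(stair.size))
    return stair


if __name__ == "__main__":
    # Simulated infant who looks only at shapes of size 8 or larger.
    result = run_test(lambda size: size >= 8.0)
    print(result.reversals, result.threshold())
```

In a real session the `looks_at` callback would be replaced by the gaze detector's verdict for each presented shape; the trial cap guards against a session that never reverses.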

Architecture Overview

- Device & Vision Components: The app runs on an iPad and uses its camera; OpenCV-based gaze detection determines whether the infant looks at each displayed shape within a set time window.

- Adaptive Staircase Algorithm: The app’s logic dynamically adjusts the optotype size up or down based on the infant’s responses, following a staircase strategy to pinpoint the smallest discernible shape.

- Minimal Manual Interaction: The interface is simple for clinicians – enter basic patient info and tap start. The system handles presenting stimuli and deciding when to stop (once acuity threshold is found), while logging all gaze responses.

- Data Logging: Every stimulus presentation and outcome is recorded internally. These logs can be analyzed (in Python) to fine-tune parameters (e.g., how long to display shapes, how long a “meaningful gaze” must last) and improve future test versions.

- Prototype Platform: Built as an iOS app (Swift) with integration of OpenCV libraries. The design emphasizes engaging visuals (simple shapes, sounds) to maintain the baby’s attention and uses on-device processing for immediate feedback.
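The two timing parameters mentioned in this list (the response window and how long a "meaningful gaze" must last) can be illustrated with a small dwell-time check. This is a hedged sketch rather than the prototype's logic: the function name `fixation_detected`, the per-frame sample format, and the default values of 500 ms and 3000 ms are assumptions. The `on_target` flag is assumed to come from the OpenCV-based gaze detector, which is not shown here.

```python
from typing import Iterable, Optional, Tuple

# One sample per camera frame: (milliseconds since stimulus onset, gaze on target?)
GazeSample = Tuple[int, bool]


def fixation_detected(
    samples: Iterable[GazeSample],
    min_dwell_ms: int = 500,   # assumed "meaningful gaze" duration
    window_ms: int = 3000,     # assumed response window after stimulus onset
) -> bool:
    """Return True if gaze stays on target continuously for min_dwell_ms
    at some point within the first window_ms of the presentation."""
    run_start: Optional[int] = None
    for t_ms, on_target in samples:
        if t_ms > window_ms:
            break  # responses after the window do not count
        if on_target:
            if run_start is None:
                run_start = t_ms  # a new on-target run begins
            if t_ms - run_start >= min_dwell_ms:
                return True
        else:
            run_start = None  # gaze left the target; reset the run
    return False


if __name__ == "__main__":
    # 10 frames per second; gaze on target from 1000 ms to 1600 ms.
    frames = [(t, 1000 <= t <= 1600) for t in range(0, 3100, 100)]
    print(fixation_detected(frames))
```

Logging the raw per-frame samples alongside each verdict would let both parameters be re-tuned offline without repeating the sessions.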

Results and Impacts

- Early prototype testing showed exam times reduced by roughly one-third, making the vision test much faster and less taxing for infants and parents.

- Provided quantitative eye exam results for infants (e.g., exact smallest shape recognized), replacing subjective judgments with objective metrics that can be tracked over time.

- Promises earlier identification of vision issues (like amblyopia or high refractive errors) in babies, allowing interventions (glasses, patching) to begin during a crucial developmental window rather than waiting years.

Skills and Tools Used

| Skill/Tool Category | Application in Pediatric Vision AI: Early Screening |
|---|---|
| Computer Vision (OpenCV) | Real-time face and eye detection to track infant gaze on an iPad camera feed; customized for infant face proportions |
| Mobile App Development | Built an iOS app in Swift, integrating camera APIs and OpenCV frameworks for on-device processing |
| UX/UI Design (Toddlers) | Created a baby-friendly interface with high-contrast shapes and engaging feedback; timed presentations to align with infant attention spans |
| Data Logging & Analysis | Implemented in-app logging of stimuli and responses; used Python (pandas) offline to analyze gaze response patterns and adjust algorithm thresholds |
| Usability Testing (Agile) | Conducted iterative tests with infants and gathered feedback from pediatric ophthalmologists, rapidly refining the prototype in short development cycles |
| Clinician Collaboration | Worked closely with eye specialists to translate gaze-tracking results into familiar clinical terms (approximate Snellen equivalents) and ensure trust in the new method |
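As an illustration of how a threshold shape size might be expressed as an approximate Snellen equivalent, the sketch below applies the standard letter-chart convention that a 20/20 optotype subtends 5 arcminutes at the eye. Applying that convention to simple shapes is an assumption, as are the function names and the 400 mm viewing distance in the example; the project's own conversion is not documented here.

```python
import math

# Letter-chart convention: a 20/20 optotype subtends 5 arcmin overall,
# so its minimum angle of resolution (MAR) is 1 arcmin.
# Using the same ratio for simple shapes is an assumption.
OPTOTYPE_ARCMIN_PER_MAR = 5.0


def visual_angle_arcmin(height_mm: float, distance_mm: float) -> float:
    """Angle subtended by an object of the given height at the given distance."""
    return math.degrees(2.0 * math.atan(height_mm / (2.0 * distance_mm))) * 60.0


def acuity_from_optotype(height_mm: float, distance_mm: float) -> dict:
    """Convert the smallest detected optotype into MAR, logMAR and Snellen."""
    mar = visual_angle_arcmin(height_mm, distance_mm) / OPTOTYPE_ARCMIN_PER_MAR
    return {
        "mar_arcmin": mar,
        "logmar": math.log10(mar),
        "snellen": f"20/{round(20 * mar)}",
    }


if __name__ == "__main__":
    # Hypothetical example: a 6 mm shape viewed from 400 mm.
    print(acuity_from_optotype(height_mm=6.0, distance_mm=400.0))
```

Because infants move, the viewing distance would in practice need to be measured or estimated per trial; a fixed distance is used here only to keep the example short.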

Cross-Project Capabilities

- Human-centered design of AI tools: this project shows the importance of tailoring technology to end-users (babies and clinicians), a user-first approach that Dr. Tuli applies when designing clinician dashboards or patient-facing AI tools in other projects.

- Real-time signal processing: experience processing live camera video within seconds parallels his work in streaming data analysis for outbreak detection and ICU monitoring, demonstrating proficiency in developing time-sensitive analytics.

- Rapid prototyping & stakeholder buy-in: by building a compelling prototype with clear “wow factor,” he secured stakeholder support – a tactic he repeated in other initiatives (e.g., quick demos for the mother-infant dashboard) to accelerate funding and adoption.

Published Papers/Tools

- Prototype Documentation: “Vision Ninja – Validation Prototype Definition Notes (Oct 2022)” – an internal design and results report used to brief the project team and hospital innovation leaders.

- Conference Abstract: Prepared “Gaze-Tracking for Infant Visual Acuity: Feasibility Study” for submission to a vision science conference (ARVO), aiming to present the methodology and early results to the research community.

- Prototype App & Demo: The Vision Ninja iPad app (prototype) was demonstrated internally via a video and live demos, helping the team win a FastTrack Innovation in Pediatric Healthcare 2022 award. (Not yet a public app, but recognized in-house for its innovative approach.)